Using analytics to promote diversity and inclusion

My company has existed for just over six years, with a focus on helping employers recruit qualified, diverse early-career talent.

For the first several years, employers consistently told us that their Diversity and Inclusion (D+I) challenges were at the “top of the funnel”: not enough women, minorities, veterans, and other underrepresented candidates were applying to their jobs in the first place.

To address that challenge, we invested time and effort in building an industry-leading early-career D+I sourcing platform.

However, after helping millions of candidates find and apply for positions, we realized something most companies have a hard time admitting: the problem often wasn’t just the top of the recruitment funnel but the entire hiring process.

We would send hundreds (or thousands) of diverse candidates to a client, but at each stage in the hiring pipeline, the representation of diverse candidates would drop.
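To make “representation drops at each stage” concrete, here is a minimal sketch of the underlying arithmetic: compute a tracked group’s share of candidates at every stage, then find the stage transition where that share falls the most. The `StageCount` structure, the stage names, and the numbers are all hypothetical, invented for illustration; they are not our platform’s data model or real client data.

```python
from dataclasses import dataclass


@dataclass
class StageCount:
    """Headcount at one hiring stage (hypothetical structure, for illustration)."""
    stage: str
    total: int    # all candidates who reached this stage
    tracked: int  # candidates from the group being tracked (e.g. women)


def representation_by_stage(stages):
    """Return (stage name, tracked group's share of candidates) in funnel order."""
    return [
        (s.stage, s.tracked / s.total if s.total else 0.0)
        for s in stages
    ]


def largest_drop(stages):
    """Return (transition, drop) where the tracked group's share falls the most."""
    shares = representation_by_stage(stages)
    worst = None
    for (prev_name, prev_share), (name, share) in zip(shares, shares[1:]):
        drop = prev_share - share
        if worst is None or drop > worst[1]:
            worst = (f"{prev_name} -> {name}", drop)
    return worst  # None if there are fewer than two stages


# Made-up numbers, purely to show the calculation.
funnel = [
    StageCount("applied", 1000, 400),
    StageCount("screened", 300, 90),
    StageCount("interviewed", 60, 15),
    StageCount("offered", 10, 2),
]

for stage, share in representation_by_stage(funnel):
    print(f"{stage:>12}: {share:.0%}")
print(largest_drop(funnel))
```

Looking at shares rather than raw counts matters here: every stage shrinks the pool, so only a falling share shows that one group is being filtered out faster than the rest.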

Companies love to point the finger at candidate flow, but when we looked at the data, the problem usually went well beyond that.

So we spent the last several years building D+I analytics dashboards (along with a fairer, compliant screening platform) to help our clients uncover their hidden biases and make the changes needed for a more equitable hiring process.

Our clients can now analyze their overall D+I performance in several ways: one dashboard walks you through how candidates of different genders and races move through your hiring process; another shows which knockout questions or criteria are knocking out minority applicants at the highest rates; a third helps you identify schools that send you quality, diverse candidates; and there are many more.

Biased job descriptions

“Biased” job descriptions, meaning job descriptions whose language tends to attract or repel certain demographics, are a major barrier to hiring diverse talent. A biased job description effectively restricts who your company will hire, because its language affects who decides to apply. This is rarely deliberate, but we see it at many companies, both large and small.

After all, it doesn’t matter how much time, effort, or money you spend on getting your company in front of a diverse audience if, at the end of the day, your job description turns off the very candidates you’re trying to attract.

There are many types of bias in job descriptions. One common form is the use of single words like “ninja,” “rock star,” or “guru,” which, according to Mediabistro, give female candidates the impression that your company is male-dominated. Using language like this across your roles can significantly reduce the number of female applicants entering your hiring funnel.

This can lengthen your time-to-hire and keep you from reaching your diversity goals, hurting your chances of creating a better workplace. Textio identified a handful of other common phrases that “exert a bias effect” but usually don’t appear on qualitative checklists. Phrases with a masculine tone include “exhaustive,” “fearless,” and “enforcement,” whereas “transparent,” “catalyst,” and “in touch with” produce a more feminine-friendly tone.

But where there’s a challenge, there’s a solution, and it comes in the form of actionable analytics.

Real-time solution

I don’t believe any company that cares about recruiting a more diverse workforce can succeed without a strong real-time analytics program. Without analytics, it’s usually impossible to properly diagnose where your gaps truly exist.

Anecdotes from hiring managers then end up driving strategy, leading your team to spend time in ways that are not only wasteful but even counterproductive.

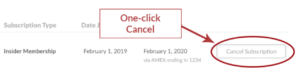

Instead, by letting data guide your strategy, you can objectively identify where diverse candidates drop off in the hiring funnel and change your process with confidence that the work will have a real impact. Our new Job Description Gender Bias dashboard tracks the drop-off between views and applications for each of your roles, broken down by gender.

For example, at any point in your hiring cycle, you can compare the percentage of female viewers with the percentage of female applicants for each job listing; this comparison determines the listing’s Bias Score. The score, on a scale of 1 to 10, makes it easy to identify which job descriptions carry the most gender bias.

The higher the score, the more biased the job description. Because bias can go both ways, the Bias Score detects bias both for and against women. Instead of guessing why a specific role is drawing too few female or male applicants, you get real data as the search progresses and can make adjustments as you see fit.
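The exact Bias Score formula isn’t described here, so the following is only a minimal sketch of how a score with these properties could work, under stated assumptions. It compares the female share of viewers with the female share of applicants, treats the signed gap as the direction of bias, and maps the gap’s size linearly onto 1 to 10. The function name `bias_score`, the `max_gap` cap of 0.5, and the linear scaling are all hypothetical choices, not the dashboard’s actual method.

```python
def bias_score(female_viewers, total_viewers,
               female_applicants, total_applicants,
               max_gap=0.5):
    """Hypothetical 1-10 score comparing female share of viewers vs. applicants.

    ASSUMPTION: max_gap is an illustrative cap; a gap of this size or larger
    (in share points, e.g. 0.5 = 50 points) maps to the maximum score of 10.
    """
    if total_viewers <= 0 or total_applicants <= 0:
        raise ValueError("need at least one viewer and one applicant")
    viewer_share = female_viewers / total_viewers
    applicant_share = female_applicants / total_applicants
    # Negative gap: women who view the post convert to applicants
    # at a lower rate than men do.
    gap = applicant_share - viewer_share
    magnitude = min(abs(gap), max_gap) / max_gap  # scaled to 0.0-1.0
    score = 1 + round(9 * magnitude)              # mapped to 1-10
    if gap < 0:
        direction = "against women"
    elif gap > 0:
        direction = "for women"
    else:
        direction = "none"
    return score, direction


# 50% of viewers are women, but only 30% of applicants are.
print(bias_score(250, 500, 30, 100))
```

Keeping the sign of the gap separate from its size is what lets a single 1-to-10 number flag bias in either direction: a listing that deters women and one that deters men can score equally high, while the returned direction says which way it leans.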

Data leads the way

Talent is equally distributed, but opportunity is not, and real-time analytics can help ensure that opportunity extends to all candidates, not only a select few. After all, data is always telling us a story.

What story does your hiring funnel data currently tell about your company, and is it the story you’d like to be telling?
